- Blog

- Best deals on macbook pro today

- How to become apart of the assassin brotherhood

- Turbotax deluxe with state download 2018

- Different margins on different pages latex

- Dual booting mac os x on windows 10

- Free spyhunter download for windows 7

- H2 jdbc no suitable driver found

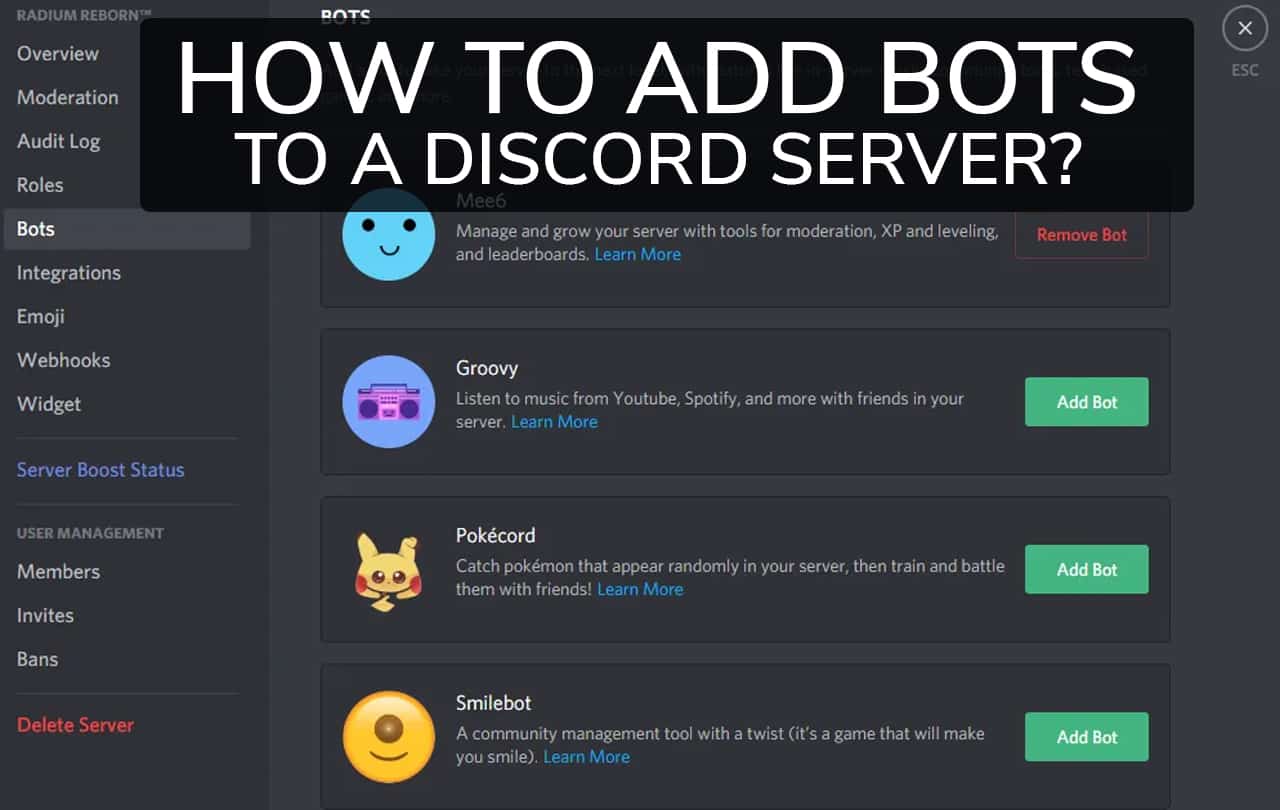

- How to create a application for discord

- Best first person rpgs on mac

- Apple mac pro 2007 service guide

- Outlook 2016 mac google calendar sync

- Free vpn client washington state

- Ms excel solver examples

- Gameguard error 114

- Final draft 7 download pc

- Spec ops the line pc freeze

- Dream daddy a dad dating simulator characters

- Cyberlink powerdirector 18 ultimate keys

- How to add the colorful reminders on mac desktop

- Amd radeon hd 7900 series drivers 2019 windows 10

- Frank ocean blonde album cover outline

- Revo uninstaller pro free license key

- Drivers ed 2 games

- Canon mp495 wireless setup windows 8

- How to install mac os on pc 2019

- Android s8- data recovery for windows 10

- Font book mac to adobe

- Free youtube to wav converter online

- Neos deck ygopro download

- HOW TO CREATE A APPLICATION FOR DISCORD HOW TO

- HOW TO CREATE A APPLICATION FOR DISCORD PROFESSIONAL

- HOW TO CREATE A APPLICATION FOR DISCORD FREE

This approach uses AI to flag inappropriate content the moment it’s created and prevent it from ever surfacing. Post-moderation scales better, but forces your users to consume potentially offensive or disturbing media.
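
As a rough sketch of what flagging content the moment it is created could look like with the Perspective API: the request and response shapes below follow my understanding of the API's `comments:analyze` method and its `TOXICITY` attribute. The helper names, the 0.8 threshold, and the sample response are illustrative assumptions, not part of the original post. The sketch makes no network call; it only builds the request body and interprets a response.

```python
# Assumed cutoff: scores at or above this are treated as too toxic to show.
# This value is a placeholder; tune it for your own community.
TOXICITY_THRESHOLD = 0.8


def build_request(text: str) -> dict:
    """Build the JSON body for a Perspective comments:analyze request."""
    return {
        "comment": {"text": text},
        "requestedAttributes": {"TOXICITY": {}},
    }


def toxicity_score(response: dict) -> float:
    """Pull the summary TOXICITY score (0.0 to 1.0) out of a response."""
    return response["attributeScores"]["TOXICITY"]["summaryScore"]["value"]


def should_block(response: dict, threshold: float = TOXICITY_THRESHOLD) -> bool:
    """Return True when the message should be hidden before anyone sees it."""
    return toxicity_score(response) >= threshold


# Hand-written stand-in for an API response; the score is invented.
sample_response = {
    "attributeScores": {
        "TOXICITY": {"summaryScore": {"value": 0.92, "type": "PROBABILITY"}}
    }
}

if __name__ == "__main__":
    print(build_request("some chat message"))
    print(should_block(sample_response))
```

In a real bot, the dictionary from `build_request` would be sent to the API with your key, and `should_block` would decide whether to delete the message. How strict the threshold should be depends on how costly a false positive is for your platform.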

You see this almost everywhere (YouTube, Facebook, Instagram, and many more). Both of these approaches clearly come with drawbacks: pre-moderation requires a large human moderation team and doesn’t work for real-time applications (chat, or any type of streaming). Instead, the job of flagging posts usually gets crowdsourced to users, who are able to “flag” or “report” content they believe violates a site’s TOS.

Don’t worry if you’ve never done any machine learning before: we’ll use the Perspective API, a completely free tool from Google, to handle the complicated bits. But before we get into the tech-y details, let’s talk about some high-level moderation strategies.

In this post, I’ll show you how to build your own AI-powered moderation bot for the chat platform Discord. For these applications, machine learning can really help. Plus, since most apps aren’t public forums like Facebook or Twitter (where we have strong expectations of free speech), the consequences of being too harsh or conservative in filtering risky content are lower.

You probably can’t share any sort of nudity or gore or hate speech on a professional networking app or an educational site for children.

But in many more instances, and for many more platforms, bad content is easy to spot. Some policy questions, like what to do with the President’s tweets or how to define hate speech, have no right answer.

Or do they? Can an AI handle moderation instead? It’s a dirty job, but someone’s got to do it. As RadioLab put it in their excellent podcast episode on the topic, “How much butt is too much butt?” Questions like these are tough enough, and then, if you’re Twitter, you have to decide what to do when the President’s tweets violate your Terms of Service. The moderation team there was responsible for the near-impossible task of drawing the line between which messages counted as risqué flirtation (usually ok), illicit come-ons (possibly ok), and sexual harassment (which would get you banned). I’ve been fascinated by the topic of moderation, that is, deciding who gets to post what on the internet, ever since I started working at the online dating site OkCupid five years ago. In this post, I’ll show you how to build an AI-powered moderator bot for the Discord chat platform using the Perspective API.